HOW WE DO IT

Platform Tools.

To achieve the best results, we team up with stakeholders and domain experts during implementation, using platform tools designed for this purpose.

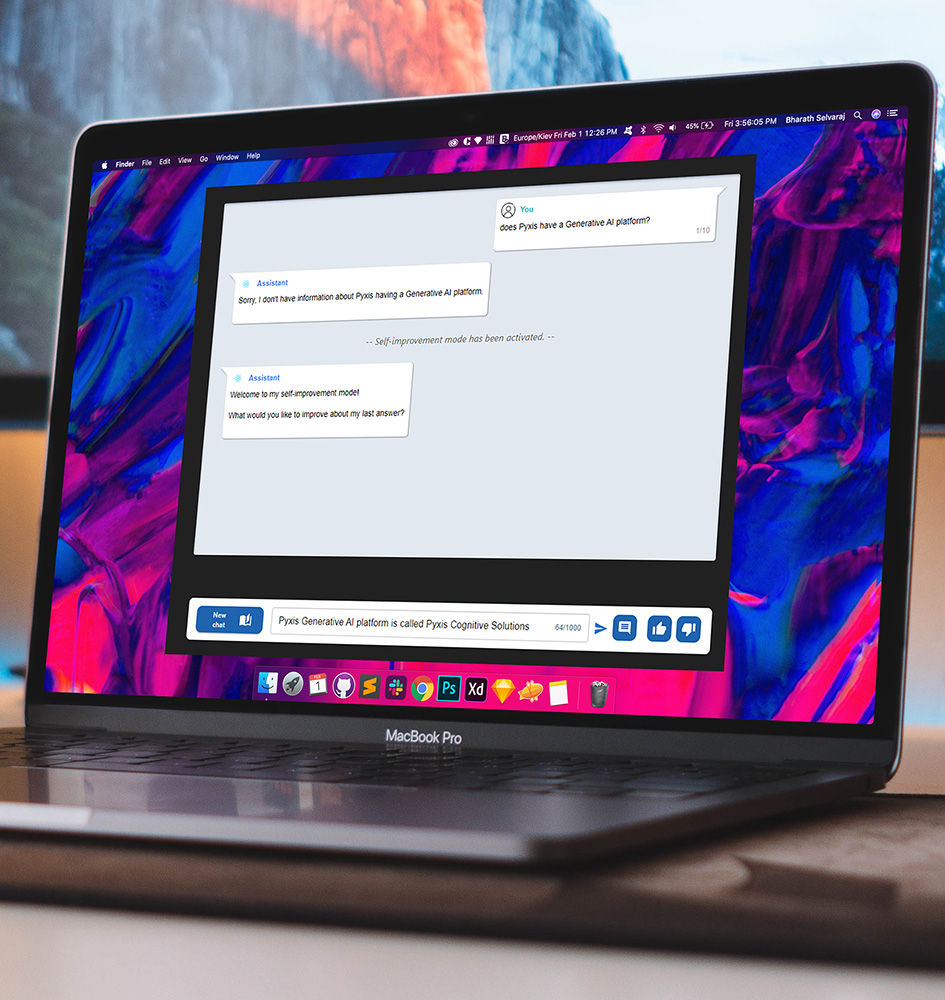

Self-improvement Mode.

During the construction stage, the functional expert can enter self-improvement mode to converse with the assistant, give feedback, suggest desired answers, and make corrections. This process lets the assistant automatically modify different components of the implementation until it produces the answer the expert wants.

Implementation Portal.

Where assistants, techniques, tools (default and custom), configurations, and prompts are defined.

Administration UI.

Where the client can manage documents and access interaction monitoring.

Evaluation Module.

A component used in implementations to measure the assistant's performance at each stage of the process. Using LLM-based metrics, embedding-based metrics, and traditional NLP metrics, we generate reports for the client showing the assistant's performance at a given moment. After the implementation stage, the module is run periodically as a regression test.
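To make the idea concrete, here is a minimal sketch of one traditional NLP metric (token-level F1 between an assistant answer and an expert reference) plus a simple regression check against a stored baseline. It is an illustration only, not the platform's actual Evaluation Module: the function names `token_f1`, `mean_score`, and `has_regressed`, the whitespace tokenization, and the `tolerance` default are all assumptions.

```python
from collections import Counter


def token_f1(predicted: str, reference: str) -> float:
    """Token-level F1 between two answers (hypothetical helper, whitespace tokens)."""
    pred_tokens = predicted.lower().split()
    ref_tokens = reference.lower().split()
    if not pred_tokens or not ref_tokens:
        # Both empty counts as a match; exactly one empty counts as a miss.
        return float(pred_tokens == ref_tokens)
    # Multiset intersection counts each shared token at most as often as it appears in both.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


def mean_score(pairs: list[tuple[str, str]]) -> float:
    """Average token F1 over (assistant answer, expert reference) pairs."""
    if not pairs:
        raise ValueError("at least one (answer, reference) pair is required")
    return sum(token_f1(answer, ref) for answer, ref in pairs) / len(pairs)


def has_regressed(current: float, baseline: float, tolerance: float = 0.02) -> bool:
    """Flag a regression when the score drops below the baseline by more than the tolerance.

    The tolerance value is an assumed default, chosen only for illustration.
    """
    return current < baseline - tolerance
```

In a periodic run, a score such as `mean_score(pairs)` would be computed on a fixed question set and passed to `has_regressed` together with the score recorded at the end of implementation. LLM-based and embedding-based metrics could be plugged into the same comparison.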

Interaction Monitoring.

Where the client can see, in real time and in pre-built dashboards, all interactions with the assistant along with user feedback.

About

Cognitive Solutions.

Our platform builds assistants that answer questions about a specific domain, for example an organization's regulations or an institution's articles and studies. The assistants can also guide the user through one or more of an organization's processes, such as carbon footprint certification or assembling a travel package tailored to the user's needs.

The result is an assistant that reliably responds in a "tuned" way following the style and feedback of the domain and/or process experts within the organization.

Rodrigo Sastre

Co-founder - LinkedIn Profile

Ignacio Sastre

Co-founder - LinkedIn Profile